CPU Working Principle Explained: How Your Computer's Brain Operates

You press a key, click a mouse, and things happen instantly on your screen. Ever stopped to think about the tiny chip making it all possible? That's the Central Processing Unit, or CPU—the brain of your computer. In this guide, I'll walk you through exactly how a CPU works, from the basic fetch-decode-execute cycle to the nitty-gritty of components like the ALU and control unit. I've spent over a decade tinkering with hardware, and I'll share some insights that most beginners miss, like why clock speed isn't everything. Let's dive in.

The Heart of Computing: What is a CPU?

At its core, a CPU is a microprocessor that executes instructions from software. Think of it as a conductor in an orchestra—it doesn't play the instruments but tells them what to do. CPUs have evolved from simple calculators in the 1970s to today's multi-core beasts, but the fundamental principle remains: process data based on programmed commands.

I remember my first computer had a single-core CPU that struggled with basic tasks. Now, even budget phones pack more processing power. The CPU sits on the motherboard, connected to memory and other components via buses. Its job? To fetch instructions from RAM, decode them into actions, execute those actions, and store results. It's a relentless loop that happens billions of times per second.

The Fetch-Decode-Execute Cycle: The CPU's Core Rhythm

This cycle is the heartbeat of CPU operation. It's a three-step dance that repeats for every instruction.

Step 1: Fetch

The CPU uses a program counter (a special register) to point to the next instruction in memory. It fetches that instruction via the memory bus. If the instruction isn't already in cache, the CPU has to go all the way to RAM, which slows things down. That's a common bottleneck I've seen in older systems.

Step 2: Decode

Once fetched, the instruction is sent to the decoder. This unit figures out what needs to be done: is it an arithmetic operation, a data move, or a jump to another part of the program? The decoder breaks it down into signals for other parts of the CPU.
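To make decoding a bit more concrete, here is a minimal sketch in Python. The 16-bit instruction layout, the opcode table, and the `decode` function are all made up for illustration; real instruction sets such as x86 or ARM use their own, far more involved encodings.

```python
# Hypothetical 16-bit instruction layout (not a real ISA):
#   bits 15-12: opcode | bits 11-8: destination register | bits 7-0: immediate
OPCODES = {0x1: "LOAD", 0x2: "ADD", 0x3: "JUMP"}


def decode(word: int) -> dict:
    """Split a 16-bit instruction word into its fields."""
    opcode = (word >> 12) & 0xF   # top 4 bits
    reg = (word >> 8) & 0xF       # next 4 bits
    imm = word & 0xFF             # low 8 bits
    return {"op": OPCODES.get(opcode, "UNKNOWN"), "reg": reg, "imm": imm}


decoded = decode(0x2305)  # opcode 0x2, register 3, immediate 5
print(decoded)
```

The point is only that a decoder slices a bit pattern into fields and looks up what each field means; in hardware this is done with wiring and logic gates rather than shifts and masks.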

Step 3: Execute

Here, the Arithmetic Logic Unit (ALU) or other units spring into action. If it's a math problem, the ALU crunches numbers; if it's a memory access, the control unit manages it. Results are stored back in registers or memory.
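The three steps can be sketched as a toy interpreter. This is a teaching model, not how any real CPU is built: the instruction names (`LOAD`, `ADD`, `JUMP_IF_ZERO`, `HALT`), the two registers, and the `run` function are assumptions chosen for the example.

```python
def run(program, max_steps=1000):
    """Run a toy program and return the registers once HALT is reached."""
    registers = {"A": 0, "B": 0}
    pc = 0  # program counter: index of the next instruction

    for _ in range(max_steps):
        instruction = program[pc]   # fetch
        pc += 1
        op, *args = instruction     # decode: operation name plus operands

        if op == "LOAD":            # execute
            reg, value = args
            registers[reg] = value
        elif op == "ADD":
            dst, src = args
            registers[dst] += registers[src]
        elif op == "JUMP_IF_ZERO":
            reg, target = args
            if registers[reg] == 0:
                pc = target         # a jump simply overwrites the program counter
        elif op == "HALT":
            return registers
        else:
            raise ValueError(f"unknown instruction: {op}")

    raise RuntimeError("step limit reached without HALT")


program = [
    ("LOAD", "A", 2),
    ("LOAD", "B", 3),
    ("ADD", "A", "B"),
    ("HALT",),
]
result = run(program)
print(result)  # {'A': 5, 'B': 3}
```

Notice that the program counter advances right after the fetch, and a jump is nothing more than writing a new value into it.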

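This cycle isn't always linear. Modern CPUs use pipelining to overlap multiple instructions, like an assembly line: while one instruction executes, the next is being decoded and a third is being fetched. But if one stage stalls, the whole pipeline can suffer. That's why branch prediction matters, something many overlook: the CPU guesses which way a branch will go so it can keep fetching, and a wrong guess means the partially processed instructions have to be thrown away.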
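A rough cycle count shows why pipelining helps and why stalls hurt. The sketch below assumes an idealized pipeline where every stage takes one cycle and each mispredicted branch costs a fixed number of wasted cycles; the stage count, misprediction count, and penalty are made-up numbers, not measurements from any real chip.

```python
def unpipelined_cycles(instructions: int, stages: int) -> int:
    """Each instruction passes through every stage before the next one starts."""
    return instructions * stages


def pipelined_cycles(instructions: int, stages: int,
                     mispredictions: int = 0, flush_penalty: int = 0) -> int:
    """Idealized pipeline: fill it once, then finish one instruction per cycle.

    Every mispredicted branch adds flush_penalty wasted cycles.
    """
    fill = stages - 1
    return fill + instructions + mispredictions * flush_penalty


n, stages = 1000, 5
print(unpipelined_cycles(n, stages))        # 5000
print(pipelined_cycles(n, stages))          # 1004
print(pipelined_cycles(n, stages, 50, 4))   # 1204
```

In this simplified model, 50 bad guesses out of 1,000 instructions add about 20% to the run time, which is the intuition behind good branch predictors being worth the silicon.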

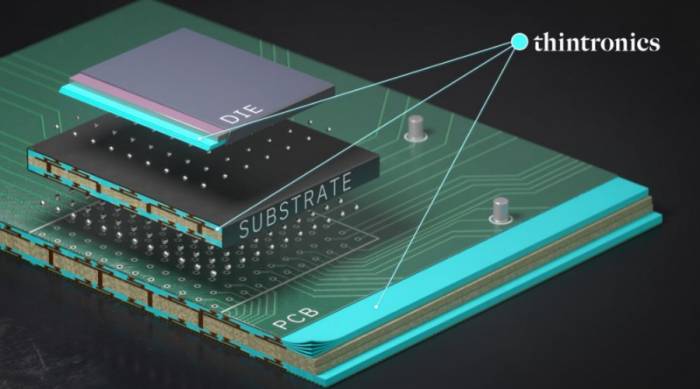

Inside the CPU: Key Components and Their Roles

Let's peel back the silicon and look at the major players. Each part has a specific job, and they work together seamlessly.

| Component | Function | Real-World Analogy |
|---|---|---|
| Control Unit (CU) | Directs operations, manages data flow between components, and coordinates the fetch-decode-execute cycle. | Like a traffic cop, ensuring everything moves in sync. |
| Arithmetic Logic Unit (ALU) | Performs mathematical calculations (add, subtract) and logical operations (AND, OR). | The calculator in your brain, handling number crunching. |
| Registers | Small, fast storage locations inside the CPU for holding data, addresses, or intermediate results. | Sticky notes on your desk: quick to access but limited space. |
| Cache Memory | High-speed memory that stores frequently used data to reduce access time to RAM. | A backpack you carry instead of going to your locker every time. |
| Clock | Generates regular pulses to synchronize operations; measured in GHz (billions of cycles per second). | A metronome keeping the rhythm for the orchestra. |

Cache is a big deal. In my experience, upgrading from a CPU with 4MB cache to one with 8MB made a noticeable difference in gaming, even with the same clock speed. L1, L2, and L3 caches act as a hierarchy—L1 is fastest but smallest, while L3 is slower but larger. Miss this, and you might blame the wrong part for slowdowns.
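One way to reason about the hierarchy is average memory access time: each level adds its own hit latency, plus the cost of going one level further out whenever it misses. The latencies and miss rates below are illustrative assumptions, not figures for any particular CPU, and `average_access_time` is a name chosen for this sketch.

```python
def average_access_time(levels, memory_latency):
    """Average access time in cycles.

    levels: list of (hit_latency_cycles, miss_rate) tuples, ordered from L1 outward.
    memory_latency: cycles for an access that misses every cache level.
    """
    time = memory_latency
    # Work inward from RAM: each level pays its hit latency, plus the
    # cost of the next level out for the fraction of accesses that miss.
    for hit_latency, miss_rate in reversed(levels):
        time = hit_latency + miss_rate * time
    return time


# Assumed numbers: (hit latency in cycles, miss rate) for L1, L2, L3
small_l3 = [(4, 0.10), (12, 0.40), (40, 0.50)]
large_l3 = [(4, 0.10), (12, 0.40), (40, 0.30)]  # bigger L3 modeled as a lower miss rate

print(round(average_access_time(small_l3, 200), 2))
print(round(average_access_time(large_l3, 200), 2))
```

With these made-up numbers, lowering only the L3 miss rate trims the average from roughly 10.8 cycles to roughly 9.2, with no change in clock speed.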

From Silicon to Speed: How Clock Speed and Cores Affect Performance

People obsess over GHz, but it's just one piece of the puzzle. Let's break down what really matters.

Clock Speed: Measured in gigahertz, it's how many cycles the CPU can complete per second. A 3.5 GHz CPU can handle 3.5 billion cycles per second. Higher GHz often means faster processing, but only if other factors like architecture and cache are optimized. I've seen 3.0 GHz chips outperform 3.5 GHz ones due to better design—Intel's Core i7 vs. older Pentiums, for example.
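The arithmetic behind the GHz figure is simple enough to sketch. The 80 ns RAM latency below is an assumed round number for illustration, and both function names are made up for this example.

```python
def cycle_time_ns(clock_ghz: float) -> float:
    """Duration of one clock cycle in nanoseconds."""
    return 1.0 / clock_ghz


def cycles_spent_waiting(latency_ns: float, clock_ghz: float) -> float:
    """Clock cycles that tick by during a delay of latency_ns."""
    return latency_ns * clock_ghz


RAM_LATENCY_NS = 80.0  # assumption: a round figure, not a measured value

for ghz in (3.0, 3.5):
    print(f"{ghz} GHz: {cycle_time_ns(ghz):.3f} ns per cycle, "
          f"{cycles_spent_waiting(RAM_LATENCY_NS, ghz):.0f} cycles per RAM trip")
```

A faster clock shortens each cycle, but a trip to RAM takes the same wall-clock time either way, so the faster chip just burns more cycles waiting. That is one reason raw GHz only pays off when cache and architecture keep the core fed.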

Cores and Threads: Modern CPUs have multiple cores, each acting like a separate processor. Dual-core, quad-core, even octa-core chips are common. More cores allow parallel processing, great for multitasking or video editing. Simultaneous multithreading (Intel calls its version Hyper-Threading) lets each physical core present itself as two logical threads, so the core can keep its execution units busy while one thread is stalled. But here's a catch: not all software uses multiple cores efficiently. Older games might barely use two cores, wasting the potential of an eight-core CPU.
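Amdahl's law puts a number on that catch: the part of a program that can't run in parallel caps the speedup, no matter how many cores you add. The parallel fractions below are illustrative guesses, not measurements of any real game or application.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Theoretical speedup over one core under Amdahl's law."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


# Assumed workloads: (label, fraction of the work that can run in parallel)
for label, fraction in (("mostly serial", 0.50), ("mostly parallel", 0.95)):
    print(f"{label}: {amdahl_speedup(fraction, 8):.2f}x on 8 cores")
```

Under these assumptions, a program that is only half parallel gets under 2x from eight cores, while one that is 95% parallel gets close to 6x.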

Case Study: Compare an Intel Core i5-10600K (6 cores, 4.1 GHz base, 4.8 GHz boost) with an AMD Ryzen 5 3600 (6 cores, 3.6 GHz base, 4.2 GHz boost). On paper, the Intel chip clocks noticeably higher, yet in multi-threaded benchmarks the Ryzen stays close and sometimes leads, helped by its microarchitecture and larger L3 cache (32MB vs. 12MB). It shows that specs alone don't tell the whole story; you need to look at real-world performance.

Other Factors: Instruction set architecture (like x86 or ARM), thermal design power (TDP), and manufacturing process (e.g., 7nm vs. 10nm) also play roles. A smaller process node can make a CPU more power-efficient and faster, as seen in Apple's M1 chips, which are built on a 5nm process. According to industry reports from IEEE, smaller transistors reduce latency and heat, boosting overall efficiency.

Common Misconceptions and Expert Insights

After years in the field, I've noticed patterns where even tech-savvy users get tripped up.

Myth 1: More GHz always means better performance. False. Clock speed is just the pace; what matters is how much work gets done per cycle (IPC, or Instructions Per Cycle). A CPU with higher IPC can outperform a higher-clocked one. For instance, AMD's Zen architecture often has better IPC than older Intel designs at similar clock speeds.
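As a back-of-the-envelope model, throughput is roughly IPC multiplied by clock speed. The IPC values below are hypothetical and don't describe any specific AMD or Intel part; real IPC also varies from one workload to the next.

```python
def instructions_per_second(ipc: float, clock_ghz: float) -> float:
    """Rough throughput estimate: instructions per cycle times cycles per second."""
    return ipc * clock_ghz * 1e9


# Hypothetical chips: A has the higher IPC, B has the higher clock.
chip_a = instructions_per_second(ipc=2.0, clock_ghz=3.0)
chip_b = instructions_per_second(ipc=1.5, clock_ghz=3.5)

print(f"Chip A: {chip_a / 1e9:.2f} billion instructions/s")
print(f"Chip B: {chip_b / 1e9:.2f} billion instructions/s")
```

In this sketch the 3.0 GHz chip comes out ahead (6.00 vs. 5.25 billion instructions per second), which is the pattern the myth ignores.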

Myth 2: All cores are created equal. Not really. Physical cores differ from logical threads, and some applications favor one over the other. Also, thermal throttling can force cores to run below their rated speed when they overheat, a problem in poorly cooled laptops. I've fixed systems where adding a better cooler unlocked hidden performance.

Myth 3: The CPU is the only bottleneck. Often, it's RAM or storage. If your CPU is waiting for data from a slow hard drive, it'll idle. Upgrading to an SSD can feel like getting a new CPU. I tell clients to check Task Manager: if CPU usage is low but the system is slow, look elsewhere.

My take? Don't just chase specs. Consider your use case. For gaming, a fast single-core performance might trump many cores. For servers, cores and reliability matter more. And always check benchmarks from trusted sources like AnandTech or Tom's Hardware.

Understanding how a CPU works isn't just academic; it helps you make smarter buying decisions and troubleshoot issues. From the fetch-decode-execute cycle to the interplay of components, every detail matters. Next time you shop for a computer, look beyond the GHz and cores; consider cache, architecture, and real-world tests. If you're curious about specific models, drop a comment below. Happy computing!
